Conf’luence Laurent Hascoet – INRIA, Sophia-Antipolis, Nice.

Tuesday 15 October, 10:30, Amphithéâtre Nougaro

Algorithmic Differentiation (AD) is a family of program transformation techniques for computing derivatives. Given a program P that computes a mathematical function F, an AD tool builds a new program P’ that computes selected derivatives of F.
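As a minimal illustration of the idea, here is a sketch that assumes the operator-overloading approach (only one of several AD models) and forward, or tangent, mode. The hypothetical `Dual` class pairs every value with its derivative, so that running the unchanged program P on `Dual` inputs also yields the directional derivative of F. It is not the implementation of any particular AD tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Dual:
    """A value paired with its tangent (derivative along a chosen direction)."""
    val: float
    dot: float = 0.0

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # sum rule: (u + v)' = u' + v'
        return Dual(self.val + other.val, self.dot + other.dot)

    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # product rule: (u * v)' = u' * v + u * v'
        return Dual(self.val * other.val,
                    self.dot * other.val + self.val * other.dot)

    __rmul__ = __mul__


def sin(x: Dual) -> Dual:
    # chain rule: (sin u)' = cos(u) * u'
    return Dual(math.sin(x.val), math.cos(x.val) * x.dot)


def P(x, y):
    # the original program, computing F(x, y) = x*y + sin(x)
    return x * y + sin(x)


# dF/dx at (2, 3): seed x with tangent 1 and y with tangent 0
out = P(Dual(2.0, 1.0), Dual(3.0, 0.0))
print(out.val)  # F(2, 3) = 6 + sin(2)
print(out.dot)  # dF/dx = y + cos(x) = 3 + cos(2), about 2.5839
```

Each run of the overloaded program gives the derivative along one input direction, so a full gradient with n inputs would need n runs in this mode.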

• We will describe the most frequently needed kinds of derivatives, with a particular focus on gradients (obtained with the adjoint, or reverse, mode).

• We will describe the most popular strategies for AD (the AD models) and the corresponding sorts of AD tools.

• We will discuss the present limitations of AD and the current research to lift them.
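To make the gradient (adjoint) case concrete, the sketch below writes by hand the kind of adjoint code a source-transformation AD tool might generate for a tiny program. The function name `P_b` and the `b` suffix on adjoint variables are hypothetical choices for this illustration, not the output of any specific tool. A forward sweep recomputes the intermediate values, then a backward sweep visits the statements in reverse order and accumulates the adjoints.

```python
import math


def P(x, y):
    # original program: F(x, y) = x*y + sin(x)
    a = x * y
    b = math.sin(x)
    f = a + b
    return f


def P_b(x, y, fb=1.0):
    """Hand-written adjoint of P: returns F and the gradient of F scaled by fb."""
    # forward sweep: recompute the intermediates needed by the backward sweep
    a = x * y
    b = math.sin(x)
    f = a + b
    # backward sweep: statements processed in reverse order
    ab = fb                   # adjoint of f = a + b w.r.t. a
    bb = fb                   # adjoint of f = a + b w.r.t. b
    xb = math.cos(x) * bb     # adjoint of b = sin(x)
    xb += y * ab              # adjoint of a = x * y w.r.t. x
    yb = x * ab               # adjoint of a = x * y w.r.t. y
    return f, xb, yb


f, xb, yb = P_b(2.0, 3.0)
print(xb, yb)  # dF/dx = 3 + cos(2), dF/dy = 2
```

A single backward sweep yields the derivatives with respect to all inputs at once, which is why the adjoint mode is preferred for gradients of functions with many inputs and a scalar output.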

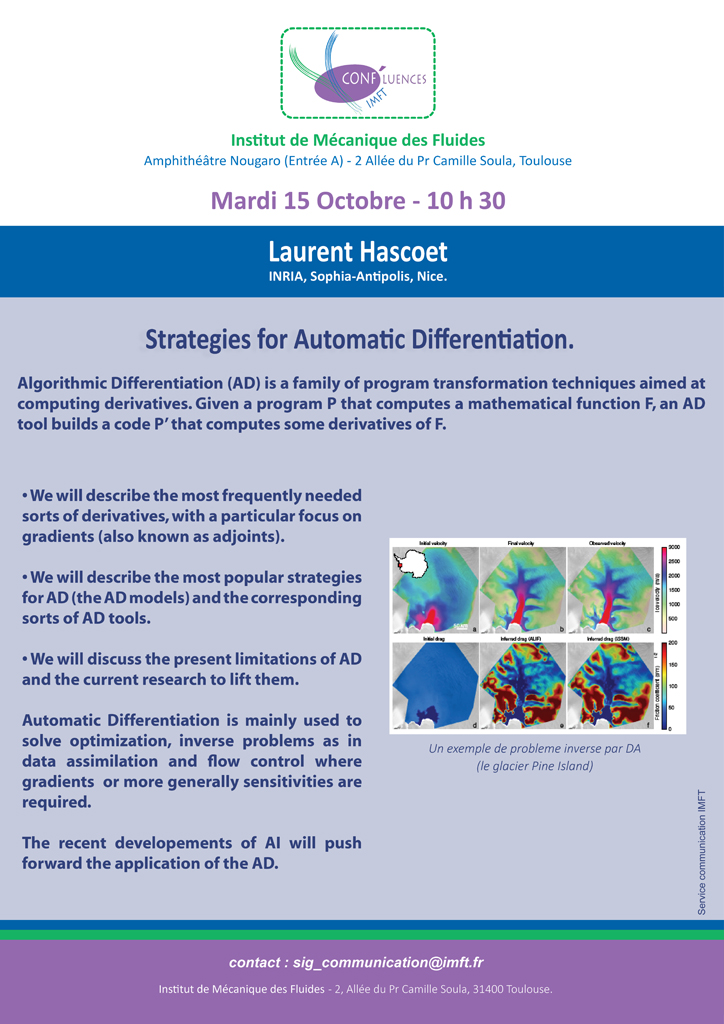

AD, also known as Automatic Differentiation, is mainly used in optimization and inverse problems, such as data assimilation and flow control, where gradients or, more generally, sensitivities are required.

Recent developments in AI will further drive the application of AD.